Reducing Misrepresentation in Life Insurance

Improving disclosure accuracy through clarity, trust, and thoughtful UX

Misrepresentation is a major cost driver in life insurance. I led a cross-functional initiative to reduce unintentional misreporting through clarity and trust, not friction, resulting in a 12.9% improvement in answer accuracy, 10.5% more policies issued, and slippage falling below our 18.6% target.

Introduction

Life insurance applications require detailed health and lifestyle disclosures. Even minor misunderstandings can lead to misrepresentation, which drives pricing inaccuracy, higher underwriting costs, and downstream operational risk.

This case study covers how I partnered across underwriting, compliance, data science, and engineering to reduce misrepresentation while increasing user trust and conversion.

The Challenge

- Misrepresentation drove significant downstream cost and a rising slippage trend.

- Most inaccuracies were unintentional, caused by unclear wording or ambiguous inputs.

- Existing flows added friction but didn't improve accuracy or truthfulness.

Phase 1 - Study

Identifying the Problem

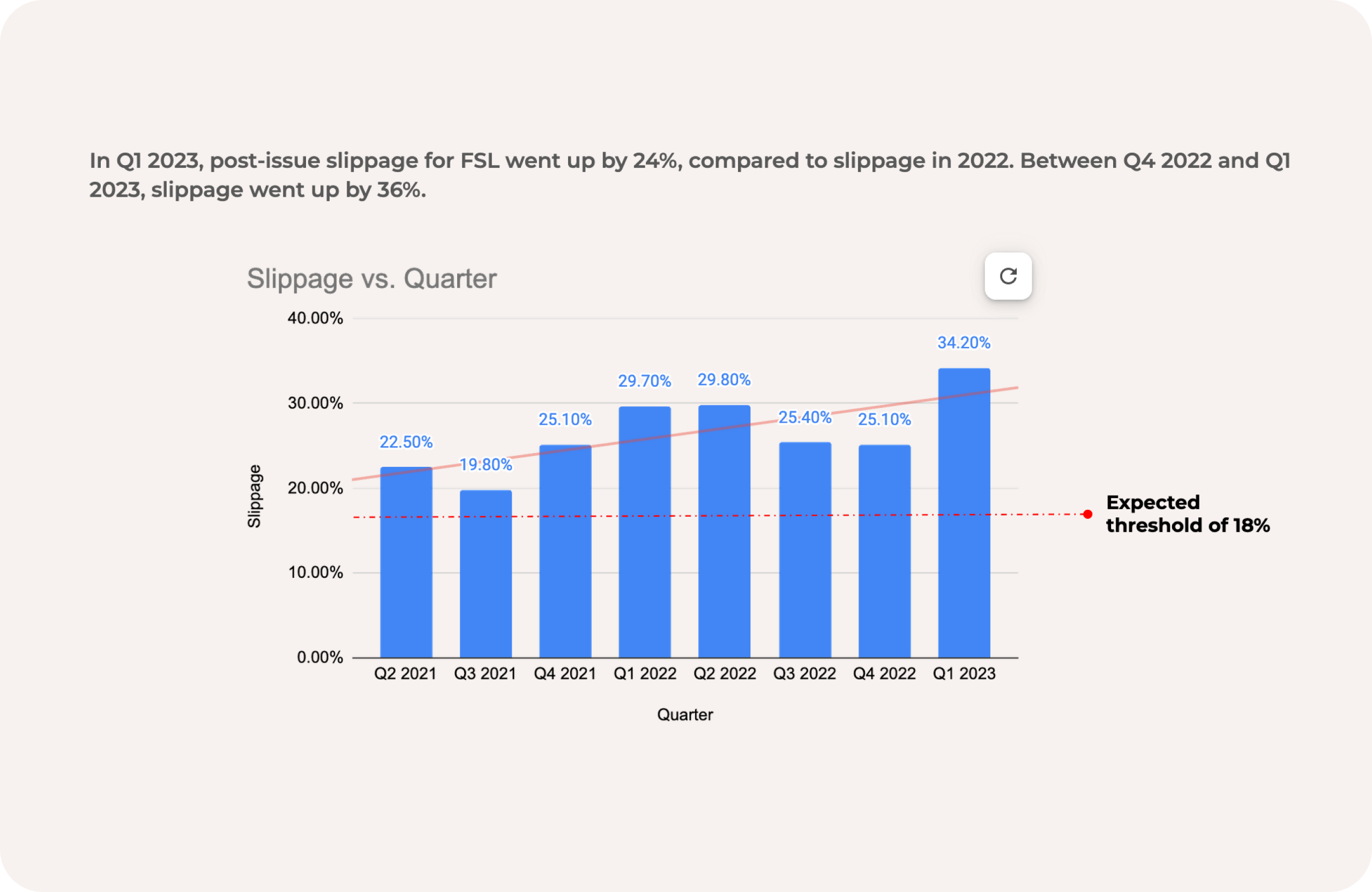

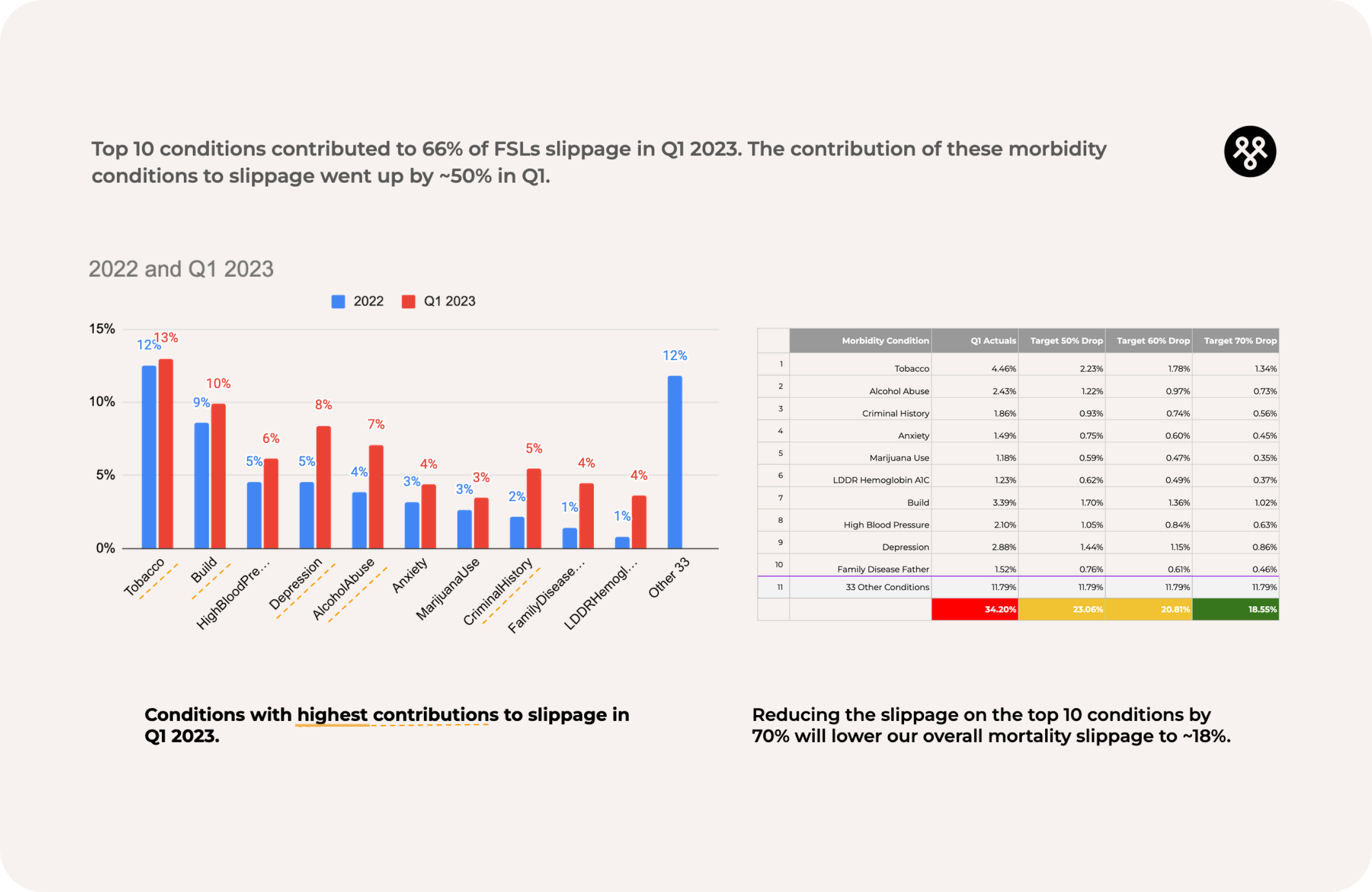

Data science and underwriting flagged that post-issue slippage (differences between automated and human underwriting) rose to 33% in Q1 2023, far above our 18.6% target. Ten conditions accounted for 66% of slippage, with a ~50% rise in impact over the quarter.

This made it clear the issue wasn't broad; it was concentrated, and the largest driver was tobacco and nicotine usage.
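To make the two figures above concrete, here is a minimal sketch of how a slippage rate and a concentration share could be computed. It assumes slippage is measured as the fraction of issued policies where the automated and human underwriting outcomes differ; the function names and the sample records are hypothetical and are not the team's actual data or pipeline.

```python
from collections import Counter

def slippage_rate(decisions):
    """Fraction of cases where automated and human outcomes differ.

    decisions: list of (automated_class, human_class) tuples.
    Assumption: this definition of slippage is illustrative only.
    """
    if not decisions:
        return 0.0
    mismatches = sum(1 for automated, human in decisions if automated != human)
    return mismatches / len(decisions)

def top_condition_share(slipped_conditions, n=10):
    """Share of slipped cases attributable to the n most frequent conditions."""
    counts = Counter(slipped_conditions)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return sum(count for _, count in counts.most_common(n)) / total

# Hypothetical sample records, made up purely for illustration.
sample_decisions = [
    ("non-smoker", "smoker"),      # outcomes differ: counts as slippage
    ("non-smoker", "non-smoker"),
    ("smoker", "smoker"),
    ("non-smoker", "non-smoker"),
]
sample_rate = slippage_rate(sample_decisions)
sample_share = top_condition_share(["tobacco", "tobacco", "asthma", "other"], n=1)
```

A high rate paired with a high top-n share is the pattern described above: the problem is large but concentrated in a few conditions.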

Goal-Oriented Research

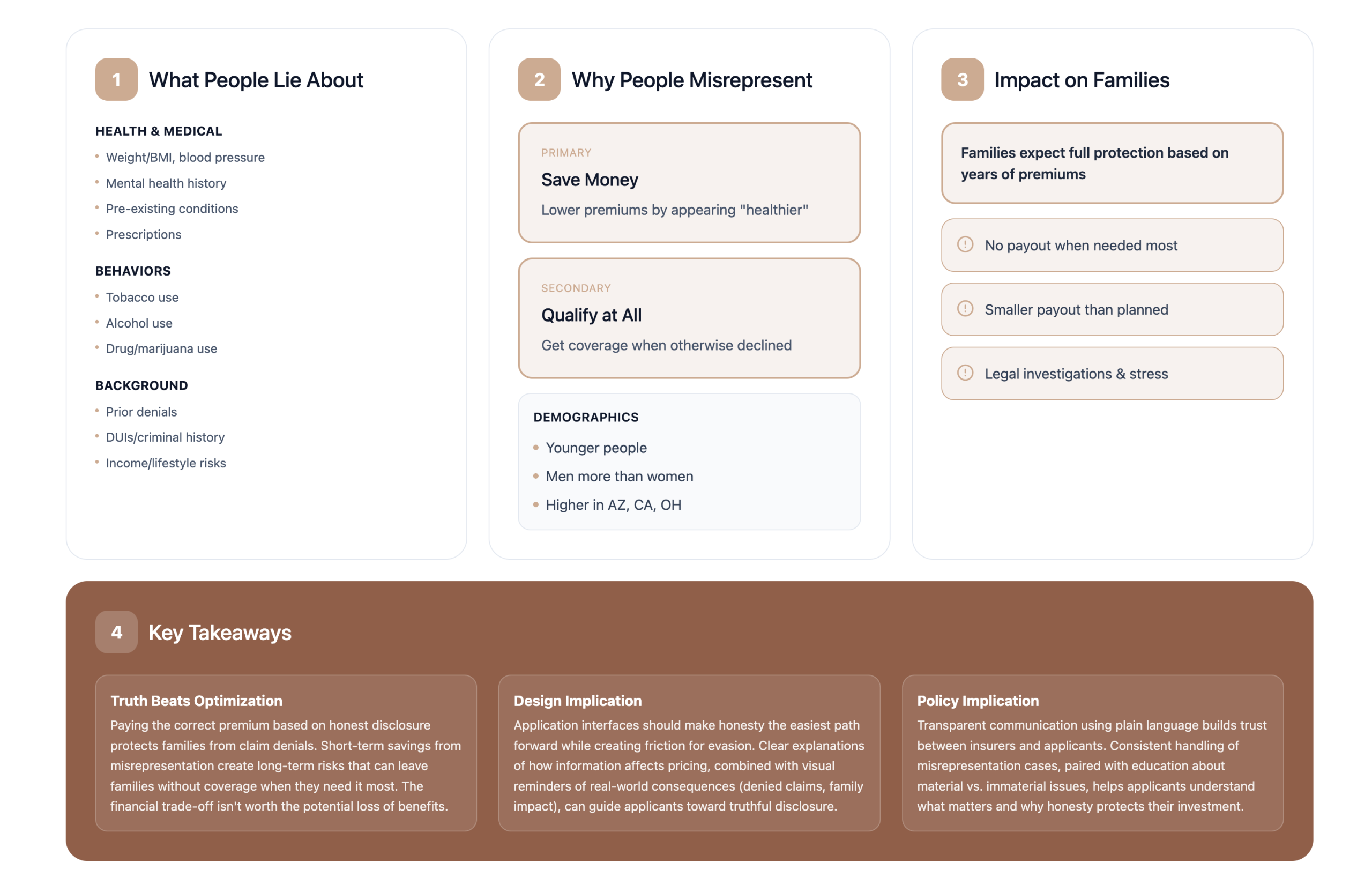

To understand why applicants were misreporting, I conducted targeted research with underwriting, data science, and a content designer.

What we uncovered

- Misrepresentation overwhelmingly came from confusion, not fraud.

- Applicants were unsure how precise their answers should be or how to interpret certain conditions.

- A small minority knowingly "took their chances," assuming details might not be verified.

- Stricter validation would increase abandonment without improving truthfulness.

How this shaped our approach

We shifted from "add more guardrails" to improving clarity, structure, tone, and contextual guidance, especially around sensitive conditions. This allowed us to encourage truthful reporting without adding friction.

Scoping the Opportunity

With root causes identified, we mapped opportunities across three pods: rules refinement, model optimization, and our own pod, which focused on improving disclosability.

We prioritized the top 10 slippage-driving conditions using:

- Underwriting impact

- Confusion likelihood

- Misreporting frequency

- Implementation effort
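One way to combine the four criteria above into a ranking is a simple benefit-over-effort score. The sketch below is an assumption for illustration: the 1 to 5 scales, the unweighted sum, the condition names, and the scores are all hypothetical and do not reflect the team's actual prioritization method.

```python
from dataclasses import dataclass

@dataclass
class Condition:
    name: str
    underwriting_impact: int      # 1 (low) to 5 (high), hypothetical scale
    confusion_likelihood: int     # 1 (low) to 5 (high)
    misreporting_frequency: int   # 1 (low) to 5 (high)
    implementation_effort: int    # 1 (easy) to 5 (hard)

def priority_score(condition):
    """Benefit divided by effort: higher means address sooner."""
    benefit = (
        condition.underwriting_impact
        + condition.confusion_likelihood
        + condition.misreporting_frequency
    )
    return benefit / condition.implementation_effort

def rank(conditions):
    """Return conditions sorted from highest to lowest priority."""
    return sorted(conditions, key=priority_score, reverse=True)

# Placeholder conditions with made-up scores.
candidates = [
    Condition("condition B", 3, 2, 2, 3),
    Condition("condition A", 5, 4, 5, 2),
    Condition("condition C", 4, 4, 3, 4),
]
ranked_names = [c.name for c in rank(candidates)]
```

Dividing by effort favors changes that are both impactful and cheap to ship, which matters when timelines are tight.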

For sensitivity reasons, this case study focuses on the highest-impact category: tobacco and nicotine usage.

Phase 2 - Create

Evaluating the Existing Toolbox

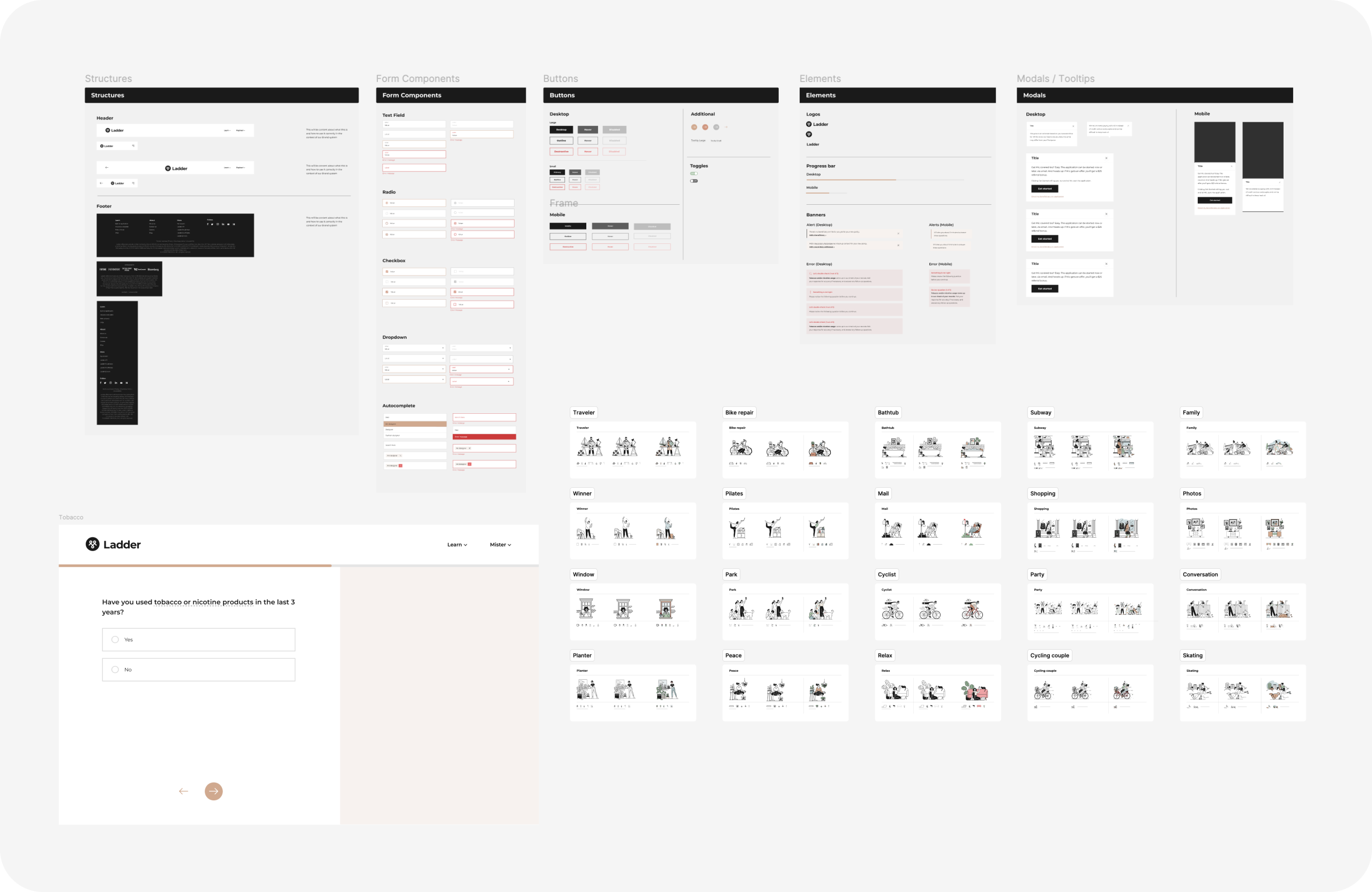

Because of regulatory and timeline constraints, introducing new UI components wasn't feasible. Instead, I audited our existing patterns (inputs, validation rules, and content behaviors) to identify where clarity could be improved without adding friction.

Key insights:

- The primary issue wasn't the rules; it was a lack of clarity.

- The absence of relatable examples and supportive guidance created anxiety rather than trust.

Working closely with a content designer, I reframed microcopy, structured contextual guidance, and repurposed existing illustrations to make disclosures feel clear, supported, and judgment-free.

This approach laid the foundation for a more trustworthy and intuitive disclosure experience, without introducing new components or regulatory risk.

Proposed Solutions

Guided by research insights, I designed solutions that increased clarity and transparency without slowing applicants down:

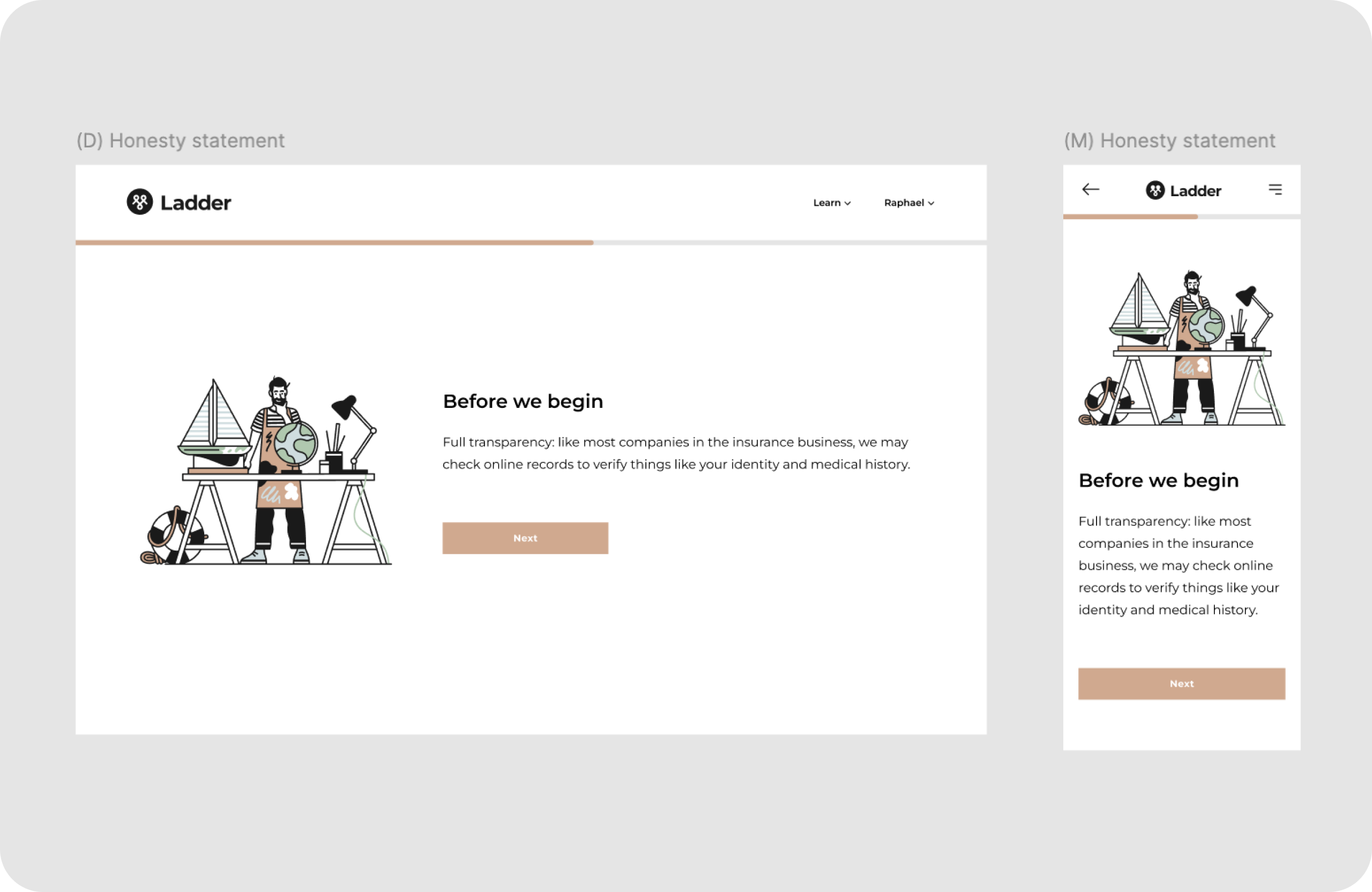

1. Honesty Statement

Set expectations upfront using empathetic, non-punitive language to normalize truthful reporting.

2. Transparent Data Practices

Explained what data we verify and why, deterring intentional misreporting without creating fear or friction.

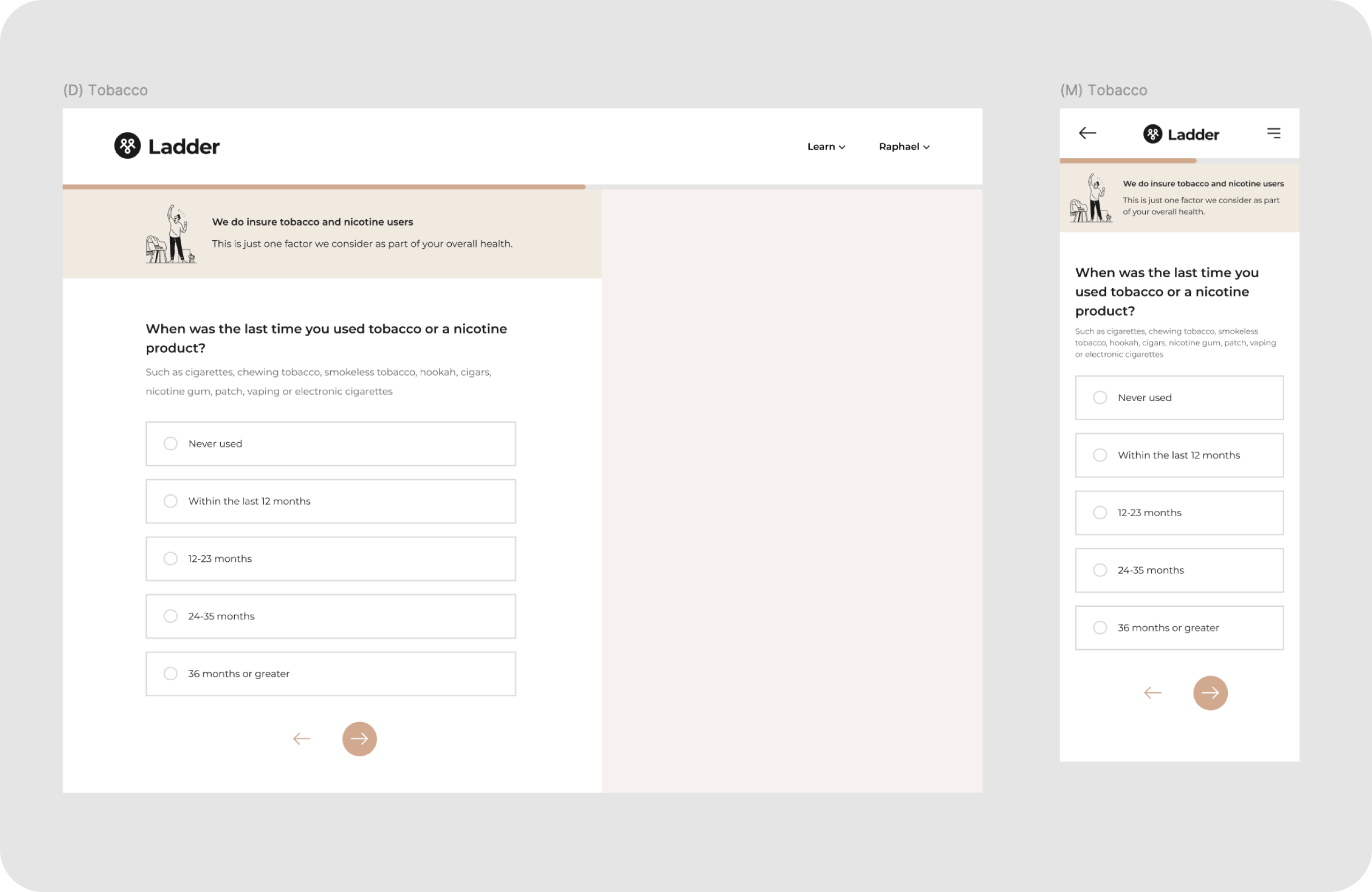

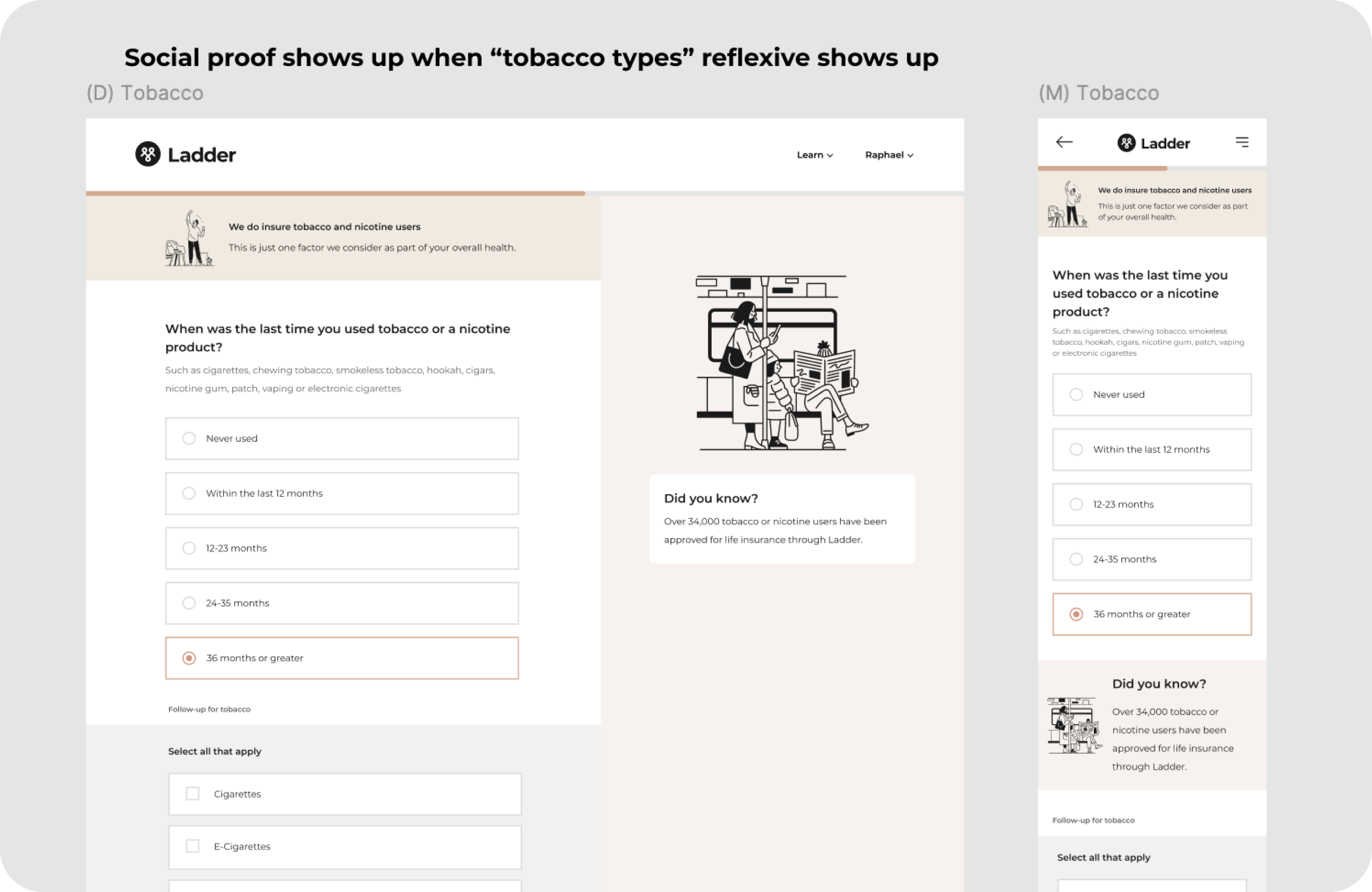

3. Encouraging, Supportive Examples

Placed contextual examples above inputs, written in a warm tone (e.g., reinforcing that smokers are eligible).

This reduced fear of penalty and made applicants more comfortable reporting truthfully.

Together, these changes aligned applicants with the correct risk class earlier, reducing slippage and downstream manual review.

Experimentation

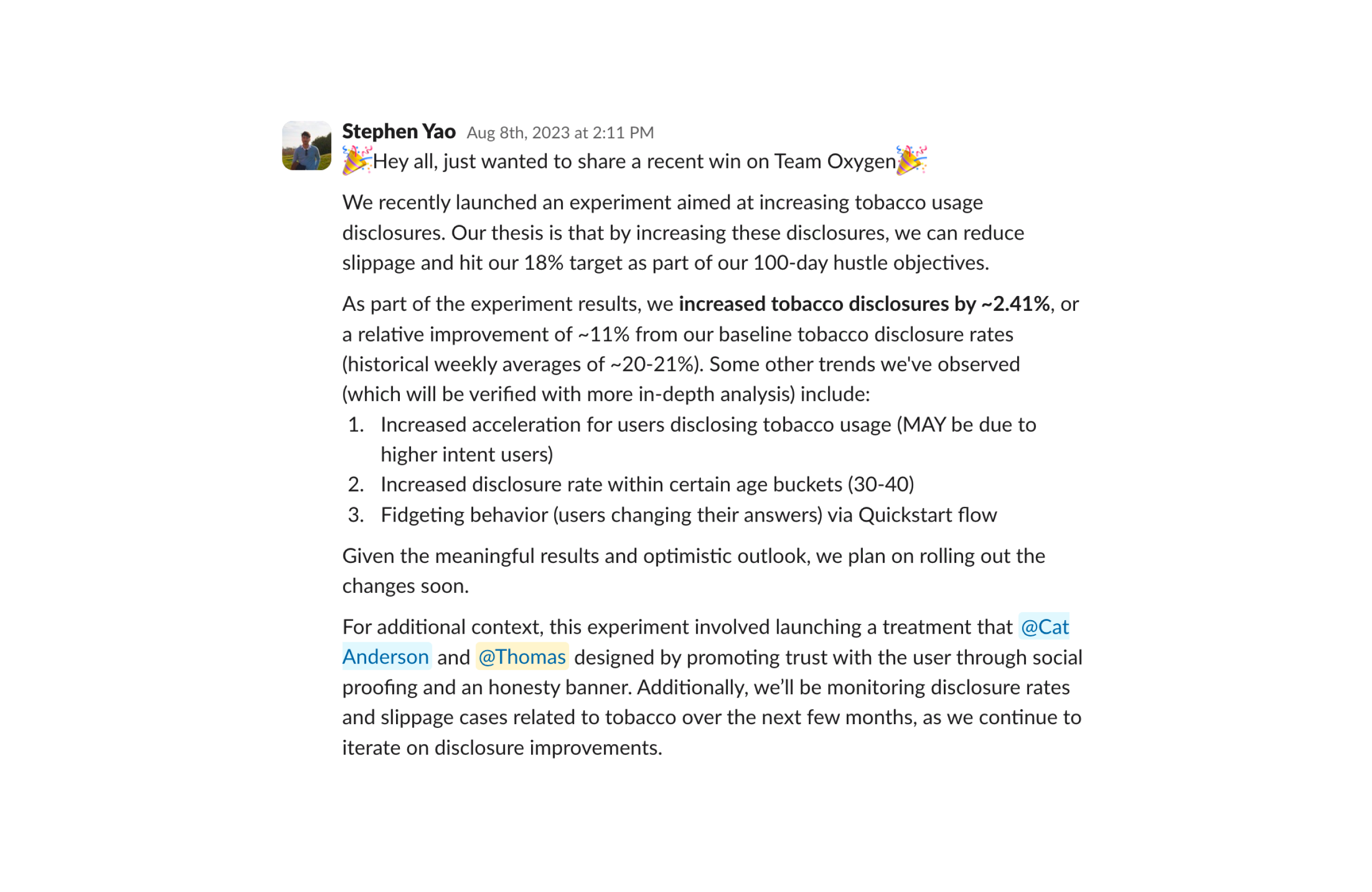

We validated the new patterns through A/B testing:

- The honesty statement and social-proof treatments produced an 11.7% relative lift in tobacco and nicotine disclosure.

- Conversion showed no negative impact, despite the sensitivity of the category.

This confirmed that clarity and encouragement, not friction, drive truthfulness.
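For readers unfamiliar with the term, relative lift compares the treatment's disclosure rate to the control's, as a fraction of the control rate. The sketch below shows the arithmetic only; the counts are hypothetical, chosen for round numbers, and are not the experiment's data.

```python
def disclosure_rate(disclosed, total):
    """Share of applicants in a variant who disclosed usage."""
    if total <= 0:
        raise ValueError("total must be positive")
    return disclosed / total

def relative_lift(control_rate, treatment_rate):
    """(treatment - control) / control, expressed as a fraction."""
    if control_rate <= 0:
        raise ValueError("control_rate must be positive")
    return (treatment_rate - control_rate) / control_rate

# Hypothetical counts, for illustration only.
control = disclosure_rate(200, 1000)    # 20% of control applicants disclosed
treatment = disclosure_rate(230, 1000)  # 23% of treatment applicants disclosed
lift = relative_lift(control, treatment)
print(f"{lift:.1%}")  # prints 15.0%
```

Note that a relative lift is not the same as a percentage-point change: in this made-up example the rate rises by 3 points, which is a 15% relative lift. A real analysis would also check statistical significance before acting on the result.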

Phase 3 - Reflect

True Impact

By Q3 2023, the initiative produced strong, measurable business and user outcomes:

- +9% improvement in substance disclosures

- +12.9% improvement in answer accuracy on the review page

- +10.5% increase in policies issued

- Slippage reduced to 15.3%, beating our 18.6% target

These outcomes confirmed the broader insight:

Clear, supportive design increases truthfulness, which reduces slippage and improves long-term risk accuracy.

This strengthens profitability, aligns applicants with the correct risk class, and reduces exposure for reinsurers.

Reflection & Key Learnings

The pivotal moment in this project was a research finding that reframed everything: most misrepresentation came from confusion, not fraud. The instinct across underwriting and compliance was to add guardrails. My job was to make the case for clarity instead, then find a way to deliver it within serious constraints.

That second part was harder than it sounds. Regulatory and timeline requirements meant we couldn't introduce new UI components: no new patterns, no new flows. Every solution had to come from what already existed: microcopy, tone, contextual examples, the framing of questions already in the product. Solving a high-stakes accuracy problem entirely within those limits forced a level of precision I wouldn't have found otherwise. A single reframed sentence, telling applicants that smokers are eligible rather than implying they'd be penalized for honesty, moved the needle more than a new component ever could have.

What I carry forward: constraints are often where the most interesting design thinking happens. When you can't add, you have to understand the problem deeply enough to find what's already there and use it differently. Slippage falling to 15.3%, below our 18.6% target, confirmed that the right reframe, applied to the right moment, is worth more than a new component.

Next Steps

In Q4 2023, I handed the initiative off to a strong successor following a company reorganization. I continue to advise when needed.

While NDAs prevent me from sharing details, the team is building on this foundation with further work intended to take the experience and accuracy even further.